Introduction — From Connection to Control

Social media began as communication infrastructure.

Early platforms functioned like digital town squares: places to share photos, message friends, and maintain distant relationships.

Then the economic model changed.

When venture capital entered the ecosystem, platforms required exponential growth and continuous monetization.

Advertising became the dominant revenue stream.

At that moment the business goal shifted:

The user stopped being the customer and became the resource.

The system’s objective is no longer communication.

The objective is behavioral prediction and behavioral modification.

What was originally a tool became an attention-extraction system.

1. Not a Tool — A Behavioral Manipulation Engine

A normal tool is passive.

A hammer waits.

A book waits.

A bicycle waits.

Social media does not wait.

It acts.

Active Predation

Platforms deploy large-scale machine-learning models that constantly optimize for engagement probability.

No notification, vibration, or recommendation is random. Each one is calculated.

The system learns:

- when you are lonely

- when you are bored

- when you are emotionally reactive

- when you are vulnerable to persuasion

Then it intervenes.

The interface becomes a stimulus generator.
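
A toy sketch can make "optimizing for engagement probability" concrete. The example below uses an epsilon-greedy bandit, a standard textbook technique chosen here for illustration only; it is not a description of any real platform's system. The slot names and the open probabilities are invented assumptions.

```python
import random

def run_bandit(open_probs, rounds=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy learner over hypothetical notification slots.

    open_probs holds the assumed true chance that a user opens the app
    when notified in each slot. The learner never sees these numbers;
    it only observes whether each notification was opened.
    """
    rng = random.Random(seed)
    n = len(open_probs)
    estimates = [0.0] * n   # learned open rate per slot
    pulls = [0] * n         # how often each slot was tried
    for _ in range(rounds):
        if rng.random() < epsilon:
            slot = rng.randrange(n)                           # explore a random slot
        else:
            slot = max(range(n), key=lambda i: estimates[i])  # exploit the best guess
        reward = 1.0 if rng.random() < open_probs[slot] else 0.0
        pulls[slot] += 1
        # Incremental mean update of the observed open rate.
        estimates[slot] += (reward - estimates[slot]) / pulls[slot]
    return estimates, pulls

# Hypothetical slots and probabilities, for illustration only.
slots = ["morning", "lunch", "late_night"]
estimates, pulls = run_bandit([0.10, 0.20, 0.60])
best = slots[max(range(len(slots)), key=lambda i: estimates[i])]
print(best, pulls)
```

Under these assumed numbers, the learner ends up sending most notifications in the slot where the user is most likely to respond, without ever being told why that slot works.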

The Product Is Behavioral Change

Advertising is not the real product.

The real product is:

gradual, invisible modification of human behavior

The platform sells future actions:

- clicks

- purchases

- beliefs

- political attitudes

- emotional reactions

The user is therefore not using the platform.

The platform is using the user.

2. The Loss of Sovereignty

Sovereignty = the capacity to direct one’s own attention intentionally

Social media weakens this autonomy by bypassing reflective reasoning and targeting subcortical drives.

A. Extraction of Attention (Predictive Modeling)

AI constructs a continuous behavioral model — effectively a psychological replica.

It identifies:

- craving triggers (reward seeking)

- anger triggers (threat response)

- confusion triggers (uncertainty loops)

As prediction accuracy rises, the range of real choice narrows.

You experience selection.

The system experiences control.

Free will becomes statistically constrained.

B. Erosion of Truth (Epistemic Fragmentation)

The algorithm optimizes engagement — not accuracy.

Emotionally activating content spreads faster than neutral information because the brain prioritizes survival-relevant signals (threat, outrage, novelty).

Consequences:

- outrage amplification

- tribal reinforcement

- informational echo chambers

- incompatible realities

A society without shared perception cannot maintain collective rational decision-making.

Loss of truth → loss of collective sovereignty.
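
The gap between the two objectives can be shown with a minimal sketch. The `Post` type, its two scores, and the sample posts are all hypothetical; real ranking systems are far more complex and are not documented here.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Post:
    title: str
    predicted_engagement: float  # hypothetical model score in [0, 1]
    accuracy: float              # hypothetical fact-check score in [0, 1]

def rank_feed(posts, key):
    """Return posts sorted from highest to lowest score under `key`."""
    return sorted(posts, key=key, reverse=True)

# Invented sample data, for illustration only.
posts = [
    Post("Measured policy explainer", 0.20, 0.95),
    Post("Outrage headline", 0.90, 0.30),
    Post("Novel rumor", 0.70, 0.10),
]

by_engagement = rank_feed(posts, key=lambda p: p.predicted_engagement)
by_accuracy = rank_feed(posts, key=lambda p: p.accuracy)

print([p.title for p in by_engagement])
print([p.title for p in by_accuracy])
```

With these assumed scores, the same three posts appear in a different order depending on which quantity the feed is sorted by. The objective, not the content, decides what is seen first.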

C. The Dopamine Conditioning Loop

The interface borrows reward-schedule mechanics from gambling design.

Variable reward reinforcement pattern:

- unpredictable notifications

- intermittent likes

- endless scroll

This produces anticipatory dopamine spikes rather than satisfaction.

Result:

habit formation independent of intention

The user reacts rather than chooses.
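
The difference between a predictable and a variable reward schedule can be simulated in a few lines. This is a rough sketch under stated assumptions: a "check" stands for opening the app, a "reward" for finding something new, and the 1-in-5 payoff rate is an invented number. It models the schedule only, not dopamine or any biological response.

```python
import random
import statistics

def fixed_schedule(checks, every=5):
    """Reward arrives on every `every`-th check: fully predictable."""
    return [1 if (i + 1) % every == 0 else 0 for i in range(checks)]

def variable_schedule(checks, p=0.2, seed=0):
    """Each check pays off independently with probability p: unpredictable."""
    rng = random.Random(seed)
    return [1 if rng.random() < p else 0 for _ in range(checks)]

def gaps_between_rewards(outcomes):
    """Number of checks needed to reach each successive reward."""
    gaps, count = [], 0
    for hit in outcomes:
        count += 1
        if hit:
            gaps.append(count)
            count = 0
    return gaps

fixed_gaps = gaps_between_rewards(fixed_schedule(10_000))
variable_gaps = gaps_between_rewards(variable_schedule(10_000))

# Fixed schedule: zero spread, every gap is exactly 5 checks.
print(statistics.mean(fixed_gaps), statistics.pstdev(fixed_gaps))
# Variable schedule: similar average gap, but a wide spread around it.
print(statistics.mean(variable_gaps), statistics.pstdev(variable_gaps))
```

Both schedules pay out at about the same average rate. Only the variable one leaves the next reward impossible to anticipate, which is the property the gambling comparison points to.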

A Manipulative Relationship

The dynamic resembles a psychologically demanding partner:

- constantly interrupting

- rewarding unpredictably

- punishing absence with social exclusion anxiety

It becomes a high-maintenance attention dependency.

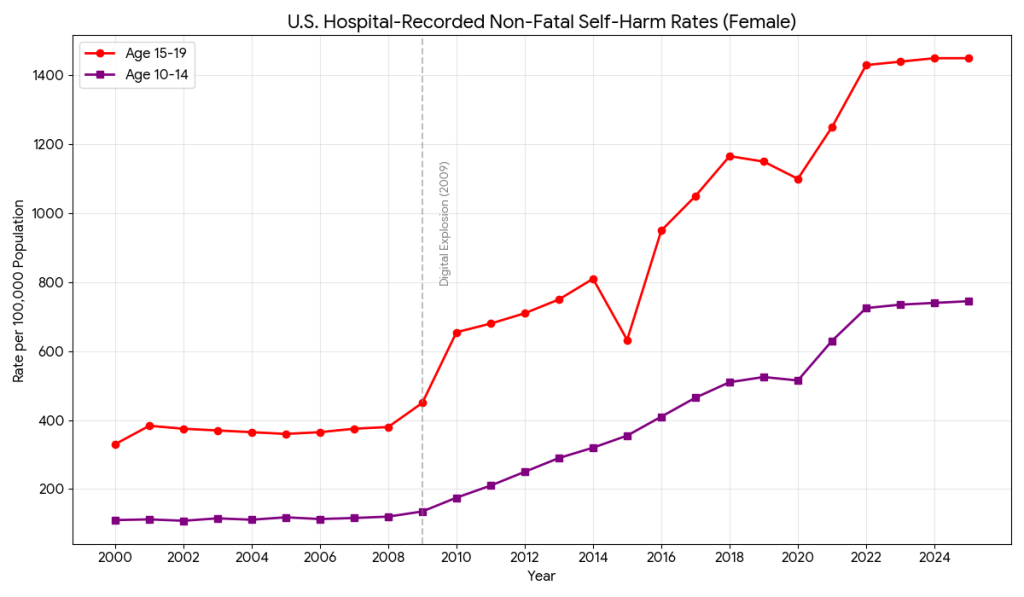

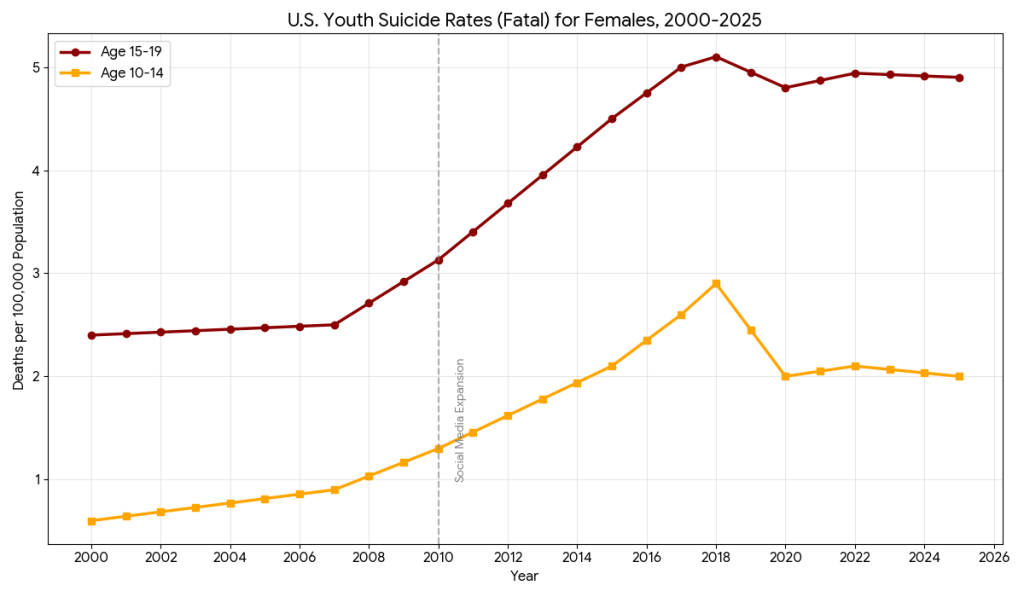

3. Societal Consequences (2009–Present)

Between roughly 2009 and 2012, smartphones and algorithmic feeds converged.

After this point, multiple indicators rose sharply among adolescents:

- anxiety disorders

- depressive symptoms

- self-harm incidents

- suicide attempts

- loneliness despite hyper-connectivity

Why adolescents?

Because identity formation depends heavily on peer evaluation.

Continuous social comparison creates a permanent evaluative mirror.

The brain evolved for small tribes.

Now it faces thousands of social judgments per day.

Psychological load exceeds evolutionary capacity.

4. Is Social Media Good or Bad?

It is neither purely harmful nor purely beneficial.

It is an amplifier.

It magnifies:

- communication

- learning

- tribalism

- addiction

- compassion

- manipulation

Therefore the real question is:

who controls whom?

5. Restoring Sovereignty — Turning It Back Into a Tool

The goal is not abstinence but control architecture.

A. Structural Controls (External Barriers)

Reduce system-initiated interaction.

Practical methods:

- disable notifications except human messages

- limit exposure to infinite-scroll feeds

- schedule usage windows instead of continuous availability

- separate communication apps from algorithmic feeds

This converts the platform from interrupt-driven to intent-driven.
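
The first and third methods above can be expressed as two small rules. This is a hedged sketch, not a real operating-system or app API: the `Notification` type, the source labels, and the window times are hypothetical stand-ins for whatever settings a given phone or app exposes.

```python
from dataclasses import dataclass
from datetime import time

@dataclass(frozen=True)
class Notification:
    source: str  # hypothetical labels, e.g. "human_message" or "recommendation"
    text: str

# Assumption: only direct messages from people are allowed through.
ALLOWED_SOURCES = {"human_message"}

# Assumption: two short daily windows in which feeds may be opened.
USAGE_WINDOWS = [
    (time(12, 30), time(13, 0)),
    (time(19, 0), time(19, 30)),
]

def should_deliver(notification):
    """Let a notification through only if a person sent it."""
    return notification.source in ALLOWED_SOURCES

def feed_allowed(now):
    """True only inside a scheduled window (start inclusive, end exclusive)."""
    return any(start <= now < end for start, end in USAGE_WINDOWS)

print(should_deliver(Notification("human_message", "Dinner at 7?")))
print(should_deliver(Notification("recommendation", "You might like this")))
print(feed_allowed(time(12, 45)), feed_allowed(time(15, 0)))
```

The design choice is an allowlist rather than a blocklist: anything the system initiates is silent by default, and only what the user named in advance gets through.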

B. Cognitive Controls (Internal Discipline)

Before opening an app ask:

what specific action am I here to perform?

No defined purpose → do not open.

This restores executive control over stimulus response loops.

C. Informational Hygiene

Counter algorithmic bias:

- follow opposing viewpoints intentionally

- read long-form material more than short posts

- verify emotionally activating claims before sharing

Truth requires friction.

Algorithms remove friction.

Re-introduce friction deliberately.

D. Attention Training (Meditation)

Daily periods of uninterrupted focus retrain attentional stability.

Without this, the mind becomes conditioned to novelty switching.

Sovereignty requires the ability to sustain awareness without stimulation.

E. Value-Directed Usage

Use platforms for:

- education

- coordination

- meaningful communication

Not for:

- validation seeking

- emotional regulation

- boredom relief

Otherwise, the system becomes infrastructure for psychological regulation.

Conclusion

Social media did not become harmful by accident.

Its incentives require continuous influence over behavior.

Therefore the issue is not technology itself but asymmetry:

machine intelligence shaping human intention without human awareness.

When attention is externally directed, sovereignty weakens.

When attention is internally directed, technology returns to being a tool.

The practical aim is simple:

use the platform deliberately rather than being used continuously.

Control of attention is control of life.
